Log files generated by web servers such as Nginx and Apache contain information that widely used analytics tools such as Google Analytics won’t display. For example, Google Analytics won’t show the IP address of a bot or a user.

Each log file entry contains a timestamp, HTTP protocol, request type, URL, status code, IP address, etc. Analyzing the log file data manually can be time-consuming. This is where the Website Crawler (WC) tool comes into the picture.
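
To make those fields concrete, here is a minimal Python sketch that pulls them out of a single access log line. It assumes the log uses the common "combined" layout that Nginx and Apache can produce; your server may be configured differently. The names `LOG_PATTERN` and `parse_line` and the sample line are made up for illustration, and this is not how Website Crawler itself parses files.

```python
import re

# Assumption: the log follows the "combined" access-log layout.
# A custom log format on your server would need a different pattern.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<timestamp>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<url>\S+) (?P<protocol>[^"]+)" '
    r'(?P<status>\d{3}) (?P<size>\S+)'
    r'(?: "(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)")?'
)

def parse_line(line):
    """Return a dict of fields for one log line, or None if it does not match."""
    match = LOG_PATTERN.match(line)
    return match.groupdict() if match else None

# Made-up sample line, for illustration only.
sample = ('203.0.113.7 - - [10/Oct/2023:13:55:36 +0000] '
          '"GET /blog/post-1 HTTP/1.1" 200 5123 "-" '
          '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"')

entry = parse_line(sample)
print(entry["ip"], entry["method"], entry["url"], entry["status"])
```

Doing this by hand for thousands of lines is the time-consuming part that a log file analyzer takes care of.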

With WC’s log file analyzer tool, you can see which URLs search bots are crawling and the IP addresses of the users who have visited the website. You can also see the URLs that people and bots visit the most.

The Website Crawler’s Log File Analyzer tool displays the following important information:

- Links with HTTP status code 200, 404, etc.

- The number of times bots have visited your site.

- Number of URLs present in the log file, and more.
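
The figures listed above can be approximated with a few lines of Python. The sketch below assumes the log entries have already been parsed into dictionaries with `url`, `status`, and `user_agent` keys. The `BOT_MARKERS` list and the `summarize` function are hypothetical, the sample entries are invented, and the tool may well detect bots in a different way.

```python
from collections import Counter

# Assumption: a visit counts as a bot visit if the user agent contains
# one of these substrings. Real bot detection can be stricter than this.
BOT_MARKERS = ("bot", "crawler", "spider")

def summarize(entries):
    """Summarize parsed log entries (dicts with 'url', 'status', 'user_agent')."""
    status_counts = Counter(e["status"] for e in entries)
    url_counts = Counter(e["url"] for e in entries)
    bot_visits = sum(
        1 for e in entries
        if any(marker in e["user_agent"].lower() for marker in BOT_MARKERS)
    )
    return {
        "status_counts": dict(status_counts),    # hits per HTTP status code
        "bot_visits": bot_visits,                # hits that look like bots
        "unique_urls": len(url_counts),          # distinct URLs in the log
        "top_urls": url_counts.most_common(3),   # most requested URLs
    }

# Invented sample data, for illustration only.
entries = [
    {"url": "/", "status": "200", "user_agent": "Mozilla/5.0 (compatible; Googlebot/2.1)"},
    {"url": "/about", "status": "200", "user_agent": "Mozilla/5.0 (Windows NT 10.0)"},
    {"url": "/old-page", "status": "404", "user_agent": "Mozilla/5.0 (compatible; bingbot/2.0)"},
    {"url": "/", "status": "200", "user_agent": "Mozilla/5.0 (X11; Linux x86_64)"},
]

summary = summarize(entries)
print(summary)
```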

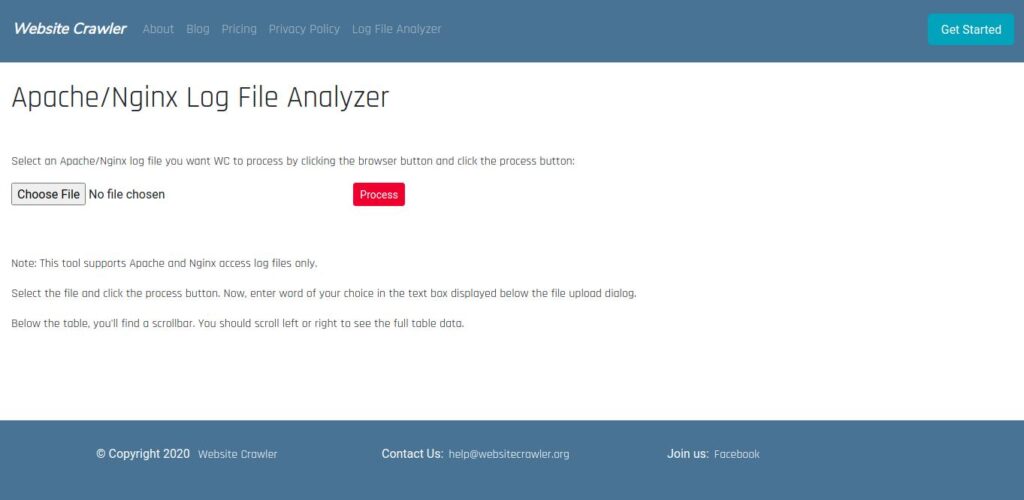

How to use the log file analyzer tool?

Click the “Choose File” option, and select the access log file on your PC. If you don’t have the file, get it from the server. Once you choose the file, click the “Process” button. You’ll now see some vital details, a table containing the log file data, and a textbox.

Log File Analyzer’s filter

You can filter the data by entering a word in the textbox below the file upload option. For example, to see the list of URLs crawled by Googlebot, enter “Googlebot” in the textbox. Once you enter the word, you’ll see the filtered data.
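
If you want to try the same kind of filtering on your own machine, a rough equivalent is a plain substring search over the raw log lines. The sketch below assumes a case-insensitive match; whether WC’s filter ignores case is not stated here, so treat that as a guess. The `filter_lines` function and the sample lines are hypothetical.

```python
def filter_lines(lines, keyword):
    """Return the log lines that contain the keyword (case-insensitive, by assumption)."""
    needle = keyword.lower()
    return [line for line in lines if needle in line.lower()]

# Invented sample lines, for illustration only.
lines = [
    '203.0.113.7 - - [10/Oct/2023:13:55:36 +0000] "GET / HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '198.51.100.4 - - [10/Oct/2023:13:56:02 +0000] "GET /about HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '192.0.2.9 - - [10/Oct/2023:13:57:11 +0000] "GET /old-page HTTP/1.1" 404 310 "-" "Mozilla/5.0 (compatible; bingbot/2.0)"',
]

googlebot_lines = filter_lines(lines, "Googlebot")
print(len(googlebot_lines))
```

The same function works for any other word, such as a status code or an IP address.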

Although WC can read large files, it will process only 100 lines of a log file uploaded by a free account holder or an unregistered user. If you’ve got a silver account, WC will process 2500 URLs.

Note: Website Crawler won’t save the file data or the entire file on the server. The file will be discarded once you’ve exited the page/closed the tab in which you’ve opened the tool.
