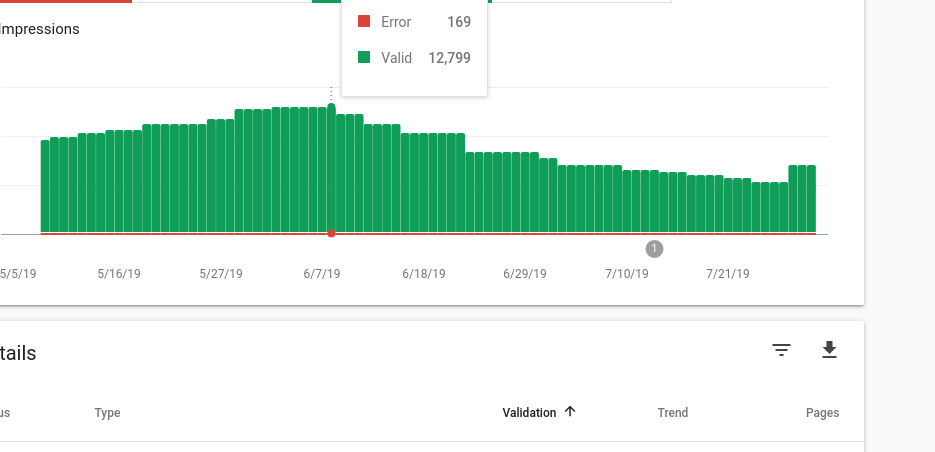

Having Google drop thousands of pages from its index is a nightmare for bloggers, webmasters, developers, and online business owners. One of my sites has around 28,000 pages. Google had indexed around 12,000 of them, but over the last few months it started dropping pages from its index.

If you follow SEO news closely, you may know that the Google deindexing bug has been the talk of the town lately. This bug has affected several large websites. I ignored the deindexing of pages on my website, thinking that the Google deindexing bug was responsible for it. This was a dreadful mistake.

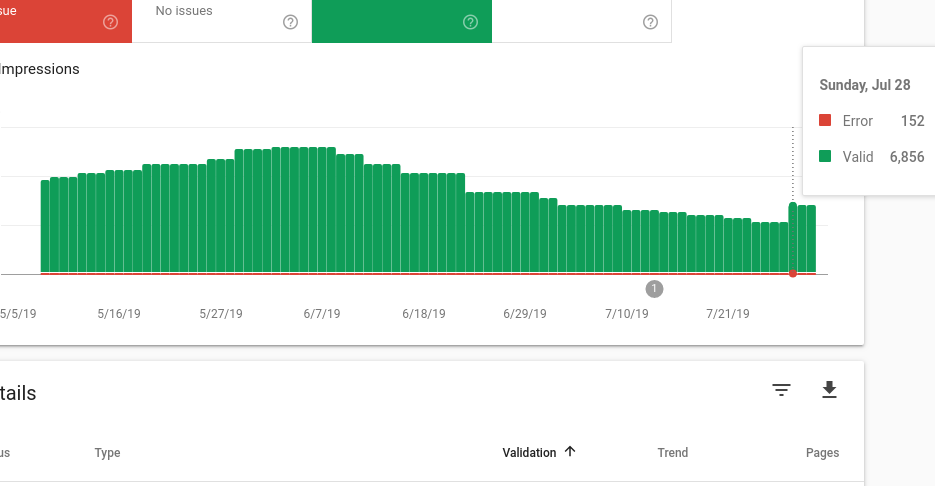

Google kept dropping pages of my site from its index. A few weeks after spotting the issue, I rechecked the Coverage report in Google Search Console, hoping that Google had fixed the deindexing bug. I was shocked to find that the count of indexed pages had dropped from 12,000 to 5,670.

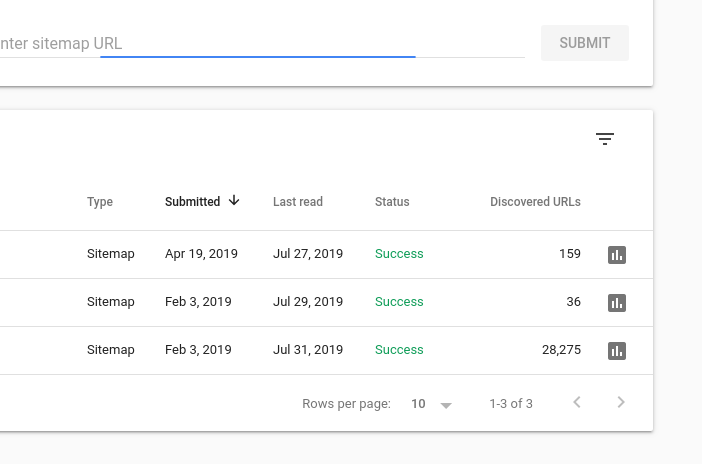

Sitemap

Did the Google deindexing bug affect my site?

No, it was a technical issue.

How I found and fixed the deindexing issue

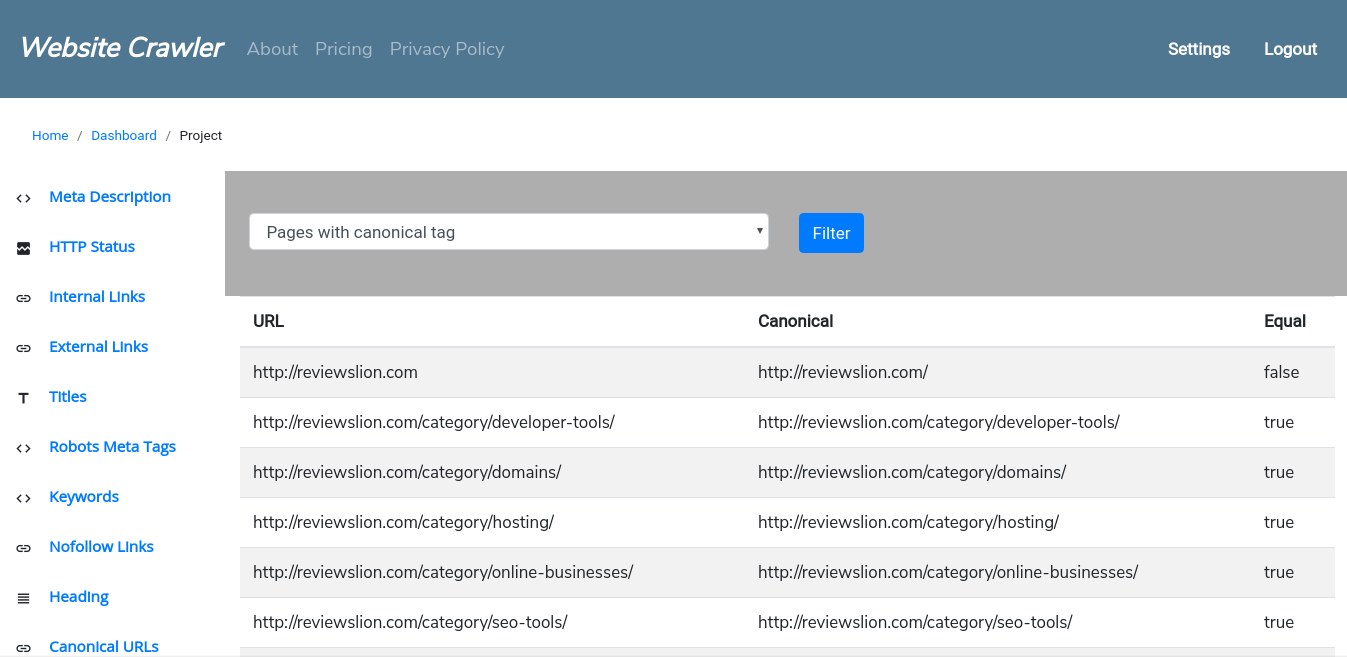

I ran Website Crawler on the affected site and then logged into my account. The first report I checked was the “Meta Robots” tag report. I suspected that the pages were being deindexed because one of my website’s functions was injecting a meta robots noindex tag into the site’s header, but I was wrong. This report was clean. Next, I opened the “HTTP Status” report to see whether all the pages on the site were working. Every page returned the status “200”. The next report I checked was the “Canonical Links” report. When I opened it, I was shocked to find that several thousand pages of the affected website had an invalid canonical tag.
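If you want to rule out a stray noindex tag by hand, the first check above can be approximated with a few lines of Python. This is only a rough sketch using the standard library; the names `RobotsMetaParser` and `has_noindex` are made up for this example and have nothing to do with how Website Crawler builds its report.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute names arrive lowercased; values may be None.
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() != "robots":
            return
        content = attr_map.get("content", "")
        # Directives are comma separated, e.g. "noindex, follow".
        self.directives += [d.strip().lower() for d in content.split(",") if d.strip()]


def has_noindex(html):
    """Return True if the page's HTML carries a meta robots noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives
```

Pass it the HTML source of a page: a result of `True` means the page itself is asking search engines not to index it.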

A few days after I fixed the issue, Google started indexing the deindexed pages again

Tip: If Website Crawler’s Canonical Links report displays false instead of true in the 3rd column, there’s a canonical link issue on the page shown in the same row. See the screenshot below:

What does the report look like?

The issue on my site

The valid syntax for canonical links is as follows:

<link rel="canonical" href="" />

I mistakenly used “value” instead of “href”, i.e. the canonical tag on my site looked like this:

<link rel="canonical" value="" />

The “value” attribute means nothing in a canonical link tag, and it confused Googlebot, Bingbot, and other search bots. I fixed this issue and resubmitted the sitemap. Google started re-including the dropped pages (see the 3rd screenshot from the top).
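A mistake like this is easy to catch with a small script before it costs you thousands of indexed pages. The sketch below is my own illustration, not Website Crawler’s implementation: it uses only Python’s standard library, and `CanonicalParser` and `invalid_canonical_tags` are hypothetical names. It flags every canonical link tag that has no usable href.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Collects the attributes of every <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonicals = []  # one attribute dict per canonical link tag

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        # Attribute names arrive lowercased; values may be None.
        attr_map = {name: (value or "") for name, value in attrs}
        # rel can hold several space-separated tokens.
        if "canonical" in attr_map.get("rel", "").lower().split():
            self.canonicals.append(attr_map)


def invalid_canonical_tags(html):
    """Return the canonical link tags that lack a non-empty href."""
    parser = CanonicalParser()
    parser.feed(html)
    return [tag for tag in parser.canonicals if not tag.get("href", "").strip()]
```

Run against the broken tag from my site, it returns that tag’s attributes (`rel` and `value`, but no `href`); run against a tag with a proper href, it returns an empty list.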

Conclusion: If Google is deindexing hundreds or thousands of pages of your website, check the canonical and meta robots tags of those pages. The issue may not be on Google’s side; a technical error like the one described above may be responsible.
